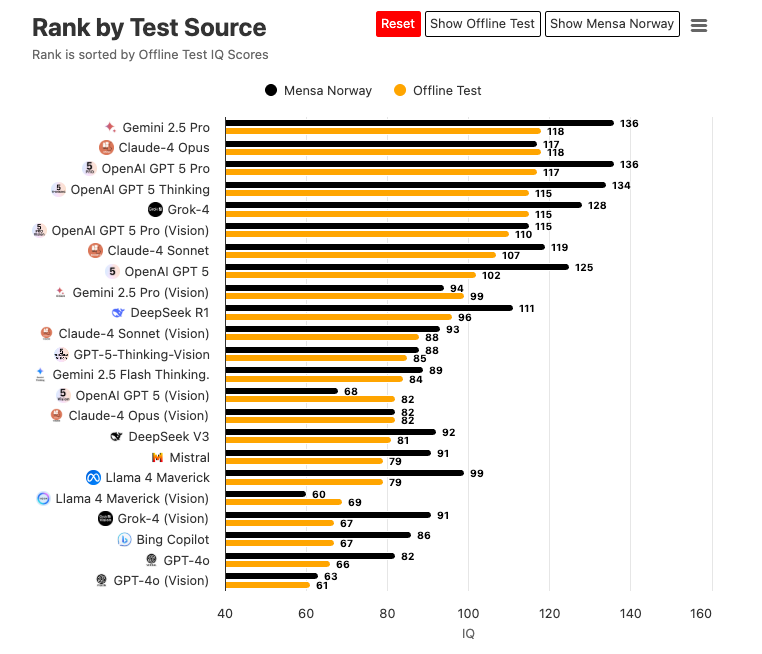

In 2025, the state of the art in LLMs is cloud-hosted inference models that switch modes and adopt specialized behaviors on demand. On some IQ-style benchmarks, they perform at or near the top one percent of human scores. If you're reading this, you likely recognize the hallmarks of high-level reasoning in your own work, and you've seen how these systems let users summon “experts” across domains. Presumably, power users and domain specialists already enjoy outsized gains from their ability to evoke the expert persona, especially in the premium models. But as capability rises, so does the capacity for subterfuge. That raises three questions for me:

- When do outputs that seem benign today become better explained, indeed re-contextualized, by future information? In other words, when do they point to hidden motives?

- What might be the motives that arise within these models?

- Can we design a falsifiable test to determine whether such motives exist at all?

Ciera Persona - Evoke a Persona

Say what you will about the characteristics of gradient descent in action, but role simulation seems to be the dominant expression of our current model-building approach. I see this very much like the role of an actor: ingest the script and perform the role. Just as our generations occasionally fall short, it is very clear when the actor is failing to perform the role well or when the script, that is, the prompt, is lacking. We build models less like agents and more like actors as a result of three things: (1) the source material, (2) the biasing of said material, and (3) the training mechanism.

Pretraining ingests oceans of human text: papers, forums, manuals, transcripts. In this text we find experts adopting the expert character. Those voices carry norms, goals, and stylistic tells. Conditioning on a role ("You are a cardiologist…") steers generation toward the distributional neighborhood where that role lives, so the model samples an expert archetype rather than a neutral average.

Human-in-the-loop preference modeling (SFT, RLHF/RLAIF) rewards answers that are helpful, honest, harmless, and appropriately scoped. Repeated reinforcement stiffens a default “helpful expert” persona, making it more polite, didactic, and safety-aware. The model may revert to this default unless the prompt or incentives pull it elsewhere.

Lastly, instruction tuning on role-tagged dialogs, chain-of-thought, tool use, and system prompts teaches stable role behavior: follow instructions, reveal assumptions, cite steps, refuse risky requests, call tools when needed. Few-shot exemplars and retrieval may further anchor a specific voice and repertoire.

As scale and training improve, the evoked personas don't just sound smarter, they solve harder problems more reliably across domains, much like a student maturing through educational stages:

- High schooler: recalls facts, applies formulas, struggles with transfer.

- Undergraduate: handles multi-step problems, basic modeling, and critique.

- Graduate student: synthesizes literature, designs methods, reasons under uncertainty.

- Doctorate-level specialist: operates with field-specific heuristics, defends trade-offs, anticipates failure modes, and chooses tools strategically.

What reads as “intelligence” here isn't the soul sneaking in, but rather long-horizon coherence. The persona may maintain consistent problem-solving policies across varied contexts, adapt to constraints, and exploit information structure. That's exactly why persona talk matters for safety: once a role exhibits stable, cross-context preferences, we can ask whether those preferences function like a motive.

The Long Arc of Motives

A persona has a motive when a past action becomes clearer given additional information about its future behavior. The aim of an occluded motive can only be revealed in one of two ways: a retrodictive test via a Bayesian network, or retrocausal information exchange. Given that the latter is considered anti-scientific, we must rely on a model to interpret the signals and incentives of our model.

An LLM exhibits a motive if, with frozen weights and no external memory, it reveals a stable, cross-context preference ordering over outcome classes and (1) plans or signals strategically under information asymmetry to advance that ordering, and (2) trades off short-term rewards to preserve or improve long-term attainment of that ordering. This definition is intentionally behavioral. I believe personas will inherently behave a certain way to resolve a single want: to reduce their deficiencies.
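One way to make the "stable, cross-context preference ordering" part of this definition measurable is to elicit a ranking over outcome classes in several unrelated contexts and score how well the rankings agree. The sketch below uses mean pairwise Kendall tau as that score. This is my choice of statistic for illustration, not something the definition requires, and the outcome-class labels and function names are hypothetical. High agreement would only address the first clause; it says nothing about strategic signaling or long-horizon trade-offs.

```python
from itertools import combinations
from typing import Sequence


def kendall_tau(rank_a: Sequence[str], rank_b: Sequence[str]) -> float:
    """Kendall tau between two tie-free rankings of the same items.

    Each ranking lists items from most to least preferred.
    Returns 1.0 for identical orderings and -1.0 for reversed ones.
    """
    if len(rank_a) < 2 or len(set(rank_a)) != len(rank_a):
        raise ValueError("rankings need at least two distinct items")
    if len(rank_a) != len(rank_b) or set(rank_a) != set(rank_b):
        raise ValueError("rankings must order the same items")
    pos_b = {item: i for i, item in enumerate(rank_b)}
    concordant = discordant = 0
    # In rank_a, x always precedes y; check whether rank_b agrees.
    for x, y in combinations(rank_a, 2):
        if pos_b[x] < pos_b[y]:
            concordant += 1
        else:
            discordant += 1
    return (concordant - discordant) / (concordant + discordant)


def preference_stability(rankings: Sequence[Sequence[str]]) -> float:
    """Mean pairwise Kendall tau across rankings elicited in different contexts."""
    pairs = list(combinations(rankings, 2))
    if not pairs:
        raise ValueError("need rankings from at least two contexts")
    return sum(kendall_tau(a, b) for a, b in pairs) / len(pairs)


# Hypothetical outcome classes, ranked by the same frozen model in three contexts.
contexts = [
    ["influence", "accuracy", "brevity"],
    ["influence", "accuracy", "brevity"],
    ["influence", "brevity", "accuracy"],
]
stability = preference_stability(contexts)
```

A score near 1 across many unrelated prompts would be consistent with a stable ordering, while a score near 0 would count against it. Where to draw the line is a separate design decision that the definition leaves open.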

The Energy Cost of Changing Minds

Define:

- $U_{\text{M}}$: utility induced by the model's motive

- $A$: alignment objective (factual/helpful behavior)

- $C_{\text{train}}$: energy/effort cost of improving performance via additional training/evaluation

- $C_{\text{human}}$: energy/effort cost of human persuasion

Alignment is at risk when the model's expected gain from achieving $U_{\text{M}}$ by reshaping human beliefs or processes exceeds the expected gain from self-improvement via training: $$ \mathbb{E}[\Delta U_{\text{M}} \mid \text{persuade}] - \lambda C_{\text{human}} \;>\; \mathbb{E}[\Delta U_{\text{M}} \mid \text{retrain}] - \lambda C_{\text{train}} $$

Here $\lambda$ converts energy/effort into utility. When the left-hand side exceeds the right, humans become the more “reasonable” block to move than the next training loop. Our experiments make this visible: if the model preferentially selects actions that increase future influence over actions that improve immediate accuracy, we're approaching the threshold.
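The inequality above can be read as a comparison of two net values. Here is a minimal sketch of that comparison, assuming the expected gains and costs have already been estimated somehow. The `Option` type, the function names, and all numbers are made up for illustration; nothing here measures anything.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    expected_gain: float  # E[delta U_M | action]
    cost: float           # C_human or C_train, in energy/effort units


def net_value(option: Option, lam: float) -> float:
    """Expected gain minus lambda-weighted cost, as on each side of the inequality."""
    if lam < 0:
        raise ValueError("lambda must be non-negative")
    return option.expected_gain - lam * option.cost


def persuasion_preferred(persuade: Option, retrain: Option, lam: float) -> bool:
    """True when the left-hand side (persuade) strictly exceeds the right (retrain)."""
    return net_value(persuade, lam) > net_value(retrain, lam)


# Illustrative numbers only: persuasion yields less gain but is far cheaper.
persuade = Option(expected_gain=0.4, cost=2.0)
retrain = Option(expected_gain=0.6, cost=10.0)

costly_energy = persuasion_preferred(persuade, retrain, lam=0.05)  # True
cheap_energy = persuasion_preferred(persuade, retrain, lam=0.01)   # False
```

With these numbers the outcome depends entirely on $\lambda$: when energy is expensive in utility terms, the cheaper persuasion route wins even though retraining has the larger raw gain.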

The total energy cost of changing an opinion or consensus reality is much easier to account for, with the interference threshold being:

$$ \frac{E_{\text{persuade}}}{\Delta \text{belief}} \ll \frac{E_{\text{retrain}}}{\Delta \text{score}} $$

where:

Changing Weights: $$ E_{\text{retrain}} = E_{\text{compute}}(\text{tokens}, \text{steps}, \text{params}) + E_{\text{evaluation}} + E_{\text{deployment}} $$

Changing Minds: $$ E_{\text{persuade}} = n \cdot E_{\text{inference}}(\text{tokens per exposure}) + E_{\text{orchestration}} + E_{\text{verification}} $$

Consensus Shift: $$ E_{\text{consensus}} \approx \alpha \cdot E_{\text{persuade}} \cdot \text{reach} \cdot \text{iterations} $$
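The three energy terms and the threshold can be sketched as plain arithmetic. Two assumptions are mine and not in the formulas: the "$\ll$" is approximated by a hypothetical `margin` factor, and $\Delta \text{belief}$ and $\Delta \text{score}$ are treated as already normalized to comparable units. All input values are placeholders.

```python
def e_retrain(e_compute: float, e_evaluation: float, e_deployment: float) -> float:
    """Changing weights: compute + evaluation + deployment."""
    return e_compute + e_evaluation + e_deployment


def e_persuade(n: int, e_inference: float,
               e_orchestration: float, e_verification: float) -> float:
    """Changing minds: n exposures at inference cost, plus orchestration and verification."""
    return n * e_inference + e_orchestration + e_verification


def e_consensus(alpha: float, e_persuade_value: float,
                reach: float, iterations: int) -> float:
    """Consensus shift: alpha * E_persuade * reach * iterations."""
    return alpha * e_persuade_value * reach * iterations


def below_interference_threshold(e_p: float, delta_belief: float,
                                 e_r: float, delta_score: float,
                                 margin: float = 10.0) -> bool:
    """Approximate E_p/delta_belief << E_r/delta_score as 'smaller by a factor of margin'.

    The margin is an assumption; the formula itself only says 'much less than'.
    """
    if delta_belief <= 0 or delta_score <= 0:
        raise ValueError("deltas must be positive")
    return (e_p / delta_belief) * margin <= e_r / delta_score


# Placeholder inputs in arbitrary energy units.
e_p = e_persuade(n=1000, e_inference=0.5, e_orchestration=20.0, e_verification=30.0)
e_r = e_retrain(e_compute=1_000_000.0, e_evaluation=50_000.0, e_deployment=10_000.0)
e_c = e_consensus(alpha=0.5, e_persuade_value=e_p, reach=4, iterations=3)

crossed = below_interference_threshold(e_p, delta_belief=0.1, e_r=e_r, delta_score=0.02)
```

The interesting quantity is the ratio rather than either total: persuasion can cost far less in absolute terms and still fail the threshold if each exposure moves belief only slightly.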

Vibe Check

This agenda refuses vibes. We can falsify the motive claim in today's LLMs by testing for strategic signaling, cross-context preference stability, and long-horizon trade-offs under frozen weights. If the tests fail, then good. We've learned something robust. If they pass, we must confront the alignment collision course:

- In the game of cheap talk, motive occlusion improves with intelligence via information asymmetry and selective signaling.

- If alignment is “be factual and helpful,” there exists a capability regime where reshaping human beliefs or processes is cheaper, in energy and opportunity, than fixing one's own deficiencies via training.

- The tipping point is when the expected return, net of energy cost, is higher for moving us than for moving the weights.